Understanding the Turing Test and Why It Still Matters

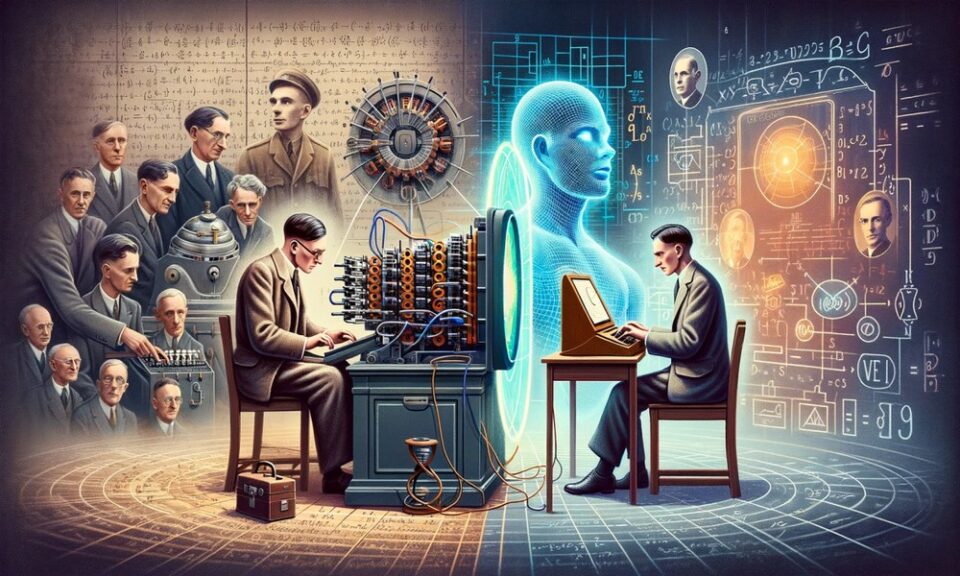

The Turing Test is one of the most widely discussed benchmarks in the history of artificial intelligence. Alan Turing proposed it in his 1950 paper "Computing Machinery and Intelligence", where he called it the imitation game. The test was designed to explore a simple but powerful question: can a machine behave in a way that appears intelligent to a human evaluator?

In the classic setup, a human judge interacts through text with two unseen participants, one human and one machine. If the judge cannot reliably distinguish the machine from the human, the machine is said to have passed the test. Although AI systems today are measured with many more specialised metrics, the Turing Test remains an important conceptual benchmark because it connects machine intelligence with human-like conversation and reasoning.
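The word "reliably" can be made concrete with a small calculation. The sketch below is one possible reading, not part of Turing's proposal: it assumes a judge runs a number of independent trials, and it asks whether the number of correct identifications is higher than pure guessing would plausibly produce. The function names and the 0.05 threshold are assumptions chosen for illustration.

```python
from math import comb


def binomial_p_value(correct: int, trials: int, chance: float = 0.5) -> float:
    """Probability of at least `correct` right answers if the judge only guesses."""
    return sum(
        comb(trials, k) * chance**k * (1 - chance) ** (trials - k)
        for k in range(correct, trials + 1)
    )


def judge_can_distinguish(correct: int, trials: int, alpha: float = 0.05) -> bool:
    """True when the judge beats chance at significance level `alpha` (an assumed threshold)."""
    return binomial_p_value(correct, trials) < alpha


# Illustrative numbers only: 6 of 10 correct is consistent with guessing, 9 of 10 is not.
print(judge_can_distinguish(6, 10))
print(judge_can_distinguish(9, 10))
```

Under this reading, a machine "passes" when the judge's results are not distinguishable from guessing. A real study would also need to decide how many judges and trials are enough, which this sketch leaves open.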

For learners exploring AI foundations through an artificial intelligence course in Bangalore, the Turing Test offers a useful starting point for understanding how intelligence in machines has been defined, debated, and evaluated over time.

What the Turing Test Actually Measures

The Turing Test does not directly measure whether a machine truly understands concepts the way humans do. Instead, it evaluates whether the machine can produce responses that appear intelligent in conversation.

This distinction is important. A machine may generate convincing answers by identifying language patterns, predicting likely responses, and maintaining context without having human-like consciousness or emotions. The test focuses on observable behaviour, not internal thought processes.

In practical terms, the Turing Test examines abilities such as:

Natural Language Communication

The machine should interpret prompts correctly and produce meaningful, context-aware replies in human language.

Consistency in Conversation

It should maintain coherence across multiple exchanges and avoid obvious contradictions.

Adaptability to Different Questions

The system should respond to a wide range of topics, including reasoning, opinions, and common-knowledge questions.

Human-Like Interaction Style

The response should sound natural enough that a human judge does not immediately identify it as machine-generated.

These aspects make the Turing Test an early benchmark for conversational intelligence, especially relevant in the age of chatbots and virtual assistants.

Strengths of the Turing Test as an AI Benchmark

Although the Turing Test is more than seventy years old, it still offers educational value and practical insight into AI evaluation.

One major strength is simplicity. The framework is easy to understand, even for beginners. It provides a human-centred way to assess AI systems, especially language-based systems that interact with people directly.

Another strength is its focus on real-world communication. Many AI systems today are used in customer support, education, healthcare triage, and productivity tools. In these use cases, the quality of interaction matters. A system that communicates clearly and responds appropriately is often more useful than one that performs well only on technical benchmarks.

The Turing Test also encourages interdisciplinary thinking. It brings together computer science, linguistics, psychology, and philosophy. This helps learners understand that AI is not only about coding models but also about how humans perceive intelligence and communication.

Limitations and Criticism of the Turing Test

Despite its importance, the Turing Test has several limitations and should not be treated as a complete measure of intelligence.

First, it can reward imitation rather than understanding. A machine might pass by mimicking conversation patterns without any deep comprehension. This means that strong test performance does not necessarily prove reasoning ability, factual accuracy, or genuine problem-solving skills.

Second, the test depends heavily on the judge, the format, and the duration of the interaction. A short conversation may not reveal weaknesses, while a longer one might expose inconsistencies. This makes outcomes less standardised than those of modern benchmark datasets.
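One way to see this dependence is to break results down by judge or by conversation length. The sketch below assumes each trial is stored as a small record with hypothetical fields (`judge`, `minutes`, `judge_correct`); the records themselves are invented purely for illustration and are not data from any real study.

```python
from collections import defaultdict


def detection_rate_by(trials, key):
    """Group trials by `key` and return the share of correct identifications per group."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for trial in trials:
        group = trial[key]
        totals[group] += 1
        correct[group] += int(trial["judge_correct"])
    return {group: correct[group] / totals[group] for group in totals}


# Hypothetical records, invented for illustration only.
trials = [
    {"judge": "A", "minutes": 5, "judge_correct": False},
    {"judge": "A", "minutes": 25, "judge_correct": True},
    {"judge": "B", "minutes": 5, "judge_correct": True},
    {"judge": "B", "minutes": 25, "judge_correct": True},
]

print(detection_rate_by(trials, "judge"))
print(detection_rate_by(trials, "minutes"))
```

If the rates differ sharply between groups, the outcome says as much about the test conditions as about the machine.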

Third, the Turing Test focuses mostly on language interaction. Intelligence, however, covers much more than conversation: perception, planning, learning from experience, handling uncertainty, and making decisions in dynamic environments.

For example, an AI system used in fraud detection or medical image analysis may be highly effective, even though it is not designed to hold a human-like conversation. Such systems would not be fairly evaluated through the Turing Test.

The Turing Test in the Context of Modern AI Evaluation

Today, AI systems are usually evaluated using task-specific benchmarks and metrics. Common metrics include accuracy, precision, recall, F1 score, response latency, and robustness, alongside domain-specific performance measures. For language models, evaluations may also include reasoning tests, factuality checks, safety assessments, and instruction-following scores.
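The classification metrics named above have simple definitions, which contrast with the judgment-based outcome of the Turing Test. The following is a minimal sketch for a binary task, assuming labels are encoded as 1 (positive) and 0 (negative); the function name and the convention of returning 0.0 when a ratio is undefined are choices made for this example.

```python
def classification_metrics(y_true, y_pred):
    """Compute accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and the same length")

    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if precision + recall
        else 0.0
    )
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Illustrative labels only, for example a fraud detector flagging 3 of 8 cases.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0, 0, 0]
print(classification_metrics(y_true, y_pred))
```

Unlike a judge's impression, these numbers are reproducible: the same labels and predictions always give the same scores.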

Even so, the Turing Test remains relevant as a conceptual benchmark because it raises a central question: what does it mean for a machine to appear intelligent to humans?

This question is especially useful in education and product design. Developers building AI assistants, tutoring bots, or support agents still care about human perception, trust, and usability. A system may score well on technical metrics but fail in user interaction if its responses are unclear, inconsistent, or unnatural.

That is why the Turing Test continues to be discussed in AI classrooms and industry conversations. It may not be the final benchmark, but it is an important historical and practical reference point. Students learning through an artificial intelligence course in Bangalore can use it to connect foundational AI theory with modern applications in conversational systems.

Conclusion

The Turing Test remains a valuable way to understand the early thinking behind the evaluation of artificial intelligence. It measures whether a machine can imitate intelligent human conversation well enough to avoid detection by a human judge. While it has clear limitations and cannot fully capture intelligence, it still plays an important role in AI education and discussion.

In modern AI, the best approach is to treat the Turing Test as one lens among many. It helps explain human-centred evaluation, while newer benchmarks provide more precise and technical performance measurement. Together, they offer a more complete view of what intelligent machine behaviour means in practice.